Afraid of AI in Networking? Turn Those Fears Into an Adoption Plan

Network operations is entering a new phase: not just “AI for alerts,” but AI that can explain, recommend, and execute workflows across your existing tooling. Many teams remain hesitant for a simple reason: the network is the business. When AI touches telemetry, configs, tickets, and change workflows, the fears are legitimate, and ignoring them is the fastest way to stall adoption.

Fear #1: “If I feed AI my network data, I’ll leak something sensitive.”

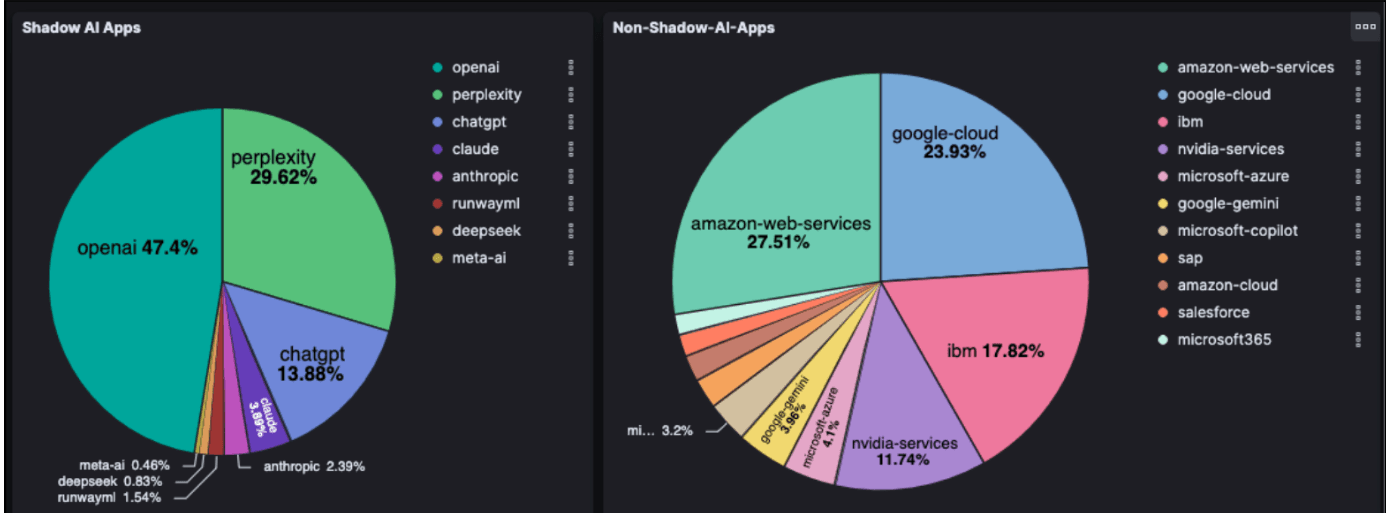

The challenge isn’t just employees pasting sensitive content into an AI chat window. AI traffic doesn’t behave like traditional enterprise application traffic: it is frequently encrypted end to end and API-driven, it spans hybrid clouds, and it includes both sanctioned and unsanctioned (“shadow AI”) usage. That makes “who is sending what to which AI service” hard to answer with legacy monitoring tools.

Shadow AI introduces real risks: source code, credentials, and customer data uploaded to unapproved tools; regulated data leaving controlled systems; proprietary details exposed in prompts; and unmonitored interactions becoming vectors for prompt injection. You can’t protect what you can’t see.

What good looks like: Real-time, application-aware visibility into AI usage across hybrid environments — context-aware identification of AI services, metadata-enriched inspection, and unified dashboards so security and network teams can enforce policy consistently.

A simple starting progression:

- Define what’s allowed — list approved tools and data sharing limits

- Establish visibility first — measure current AI usage including shadow AI

- Classify and control high-risk data flows (credentials, customer data, regulated data)

- Make it easy to do the right thing — provide a sanctioned path so employees don’t route around IT
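The first three steps of this progression can be sketched as a small policy check. This is only an illustration under stated assumptions: the host names, the `Flow` record, and the regex patterns are hypothetical, and it assumes a text sample of the request is available at an inspection point (for example, a sanctioned proxy), which is often not the case for end-to-end encrypted traffic.

```python
import re
from dataclasses import dataclass

# Hypothetical allowlist: replace with the tools your organization has approved.
APPROVED_AI_HOSTS = {"ai.internal.example.com"}
# Hypothetical catalog of hosts recognized as AI services (approved or not).
KNOWN_AI_HOSTS = APPROVED_AI_HOSTS | {"chat.example-ai.com", "api.example-llm.net"}

# Illustrative patterns only; a real classifier needs far broader coverage.
SENSITIVE_PATTERNS = {
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "password_assignment": re.compile(r"(?i)\b(password|secret)\s*[=:]\s*\S+"),
    "snmp_community": re.compile(r"(?i)\bsnmp-server community\s+\S+"),
}


@dataclass
class Flow:
    """One observed request; the fields are assumptions for this sketch."""
    user: str
    dest_host: str
    payload_sample: str = ""


def classify_flow(flow: Flow) -> dict:
    """Label a flow as sanctioned, shadow, or non-AI, and flag risky content."""
    if flow.dest_host not in KNOWN_AI_HOSTS:
        return {"usage": "not_ai", "findings": [], "action": "ignore"}

    usage = "sanctioned" if flow.dest_host in APPROVED_AI_HOSTS else "shadow"
    findings = sorted(
        name
        for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(flow.payload_sample)
    )

    if findings:
        action = "block"    # high-risk data never leaves, even to approved tools
    elif usage == "shadow":
        action = "review"   # visible but unapproved: steer the user to the sanctioned path
    else:
        action = "allow"
    return {"usage": usage, "findings": findings, "action": action}


if __name__ == "__main__":
    demo = Flow("alice", "chat.example-ai.com", "snmp-server community s3cret RO")
    print(classify_flow(demo))
```

Returning "review" rather than "block" for clean shadow traffic is a deliberate choice in this sketch: it keeps visibility first and leaves room to offer a sanctioned alternative before enforcing.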

Figure 1: Shadow and Non-shadow AI Apps Identification using Aviz Elastic Node

Privacy governance becomes an enabler: teams adopt AI with control, rather than pretending AI use isn’t happening until a leak forces the issue.

Fear #2: “Our network is mission-critical. How do we trust AI without risking an outage?”

Even after improving visibility and privacy guardrails, a second fear surfaces fast. The practical answer isn’t a debate about “copilot vs. agent” — it’s a staged trust ladder.

Stage 1: AI that reads and explains (no production changes)

This is the lowest-risk path to value because you’re not touching the control plane. Examples: incident summarization and timeline reconstruction, correlation and hypothesis generation, drafted troubleshooting workflows. Trust goal: faster, more consistent operator decisions without autonomous change.

Stage 2: AI that orchestrates low-risk workflows (with guardrails and approvals)

Once Stage 1 proves useful, expand to AI-driven workflow orchestration that is still governed and still auditable. Good actions here: automated read-only evidence gathering, pre-change validation runs, change-plan drafting for human approval. Trust goal: reduce toil and improve consistency while keeping humans accountable for high-impact actions.

Stage 3: AI that acts (only inside a safety cage)

This is where mission-critical fear peaks. If you allow execution, constrain it tightly: maintenance window enforcement, blast radius limits (one device/site/domain), mandatory rollback steps, full audit trails, and a clear decision on where AI runs based on risk tolerance. Trust goal: AI executes repeatable, bounded actions safely, never free-running automation.
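A Stage 3 safety cage can be expressed as a pre-execution gate that every AI-proposed change must pass. The sketch below is a minimal illustration, not a reference implementation: `ChangeRequest`, `SafetyCage`, the 01:00 to 04:00 window, and the one-device/one-site limits are all assumed names and values chosen for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, time


@dataclass
class ChangeRequest:
    """An AI-proposed change; the field names are assumptions for this sketch."""
    devices: list
    sites: set
    commands: list
    rollback_commands: list
    requested_at: datetime


@dataclass
class SafetyCage:
    # Hypothetical defaults. The window is assumed not to cross midnight.
    window_start: time = time(1, 0)
    window_end: time = time(4, 0)
    max_devices: int = 1
    max_sites: int = 1
    audit_log: list = field(default_factory=list)

    def evaluate(self, req: ChangeRequest):
        """Return (decision, violations) and record the outcome for audit."""
        violations = []
        if not (self.window_start <= req.requested_at.time() < self.window_end):
            violations.append("outside maintenance window")
        if len(req.devices) > self.max_devices or len(req.sites) > self.max_sites:
            violations.append("blast radius exceeded")
        if not req.rollback_commands:
            violations.append("missing rollback steps")

        decision = "deny" if violations else "allow"
        # Every evaluation is logged, whether it was allowed or denied.
        self.audit_log.append(
            {
                "requested_at": req.requested_at.isoformat(),
                "devices": list(req.devices),
                "decision": decision,
                "violations": list(violations),
            }
        )
        return decision, violations


if __name__ == "__main__":
    cage = SafetyCage()
    request = ChangeRequest(
        devices=["leaf-01"],
        sites={"dc1"},
        commands=["interface Ethernet1", "description uplink"],
        rollback_commands=["interface Ethernet1", "no description"],
        requested_at=datetime(2024, 1, 1, 2, 0),
    )
    print(cage.evaluate(request))
```

The gate only decides and records; it does not execute anything. Keeping the decision separate from execution makes the same check reusable in Stage 2, where a human approves the change after the gate has passed.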

The connecting principle from Fear #1 applies here too: you can’t govern what you can’t see, and you can’t trust what you can’t constrain.

Fear #3: “Even if it works, I’ll lose control of governance, cost, and accountability.”

This is the leadership fear. Gartner research found 90% of CIOs expressed concern about losing control over AI systems. On the operational side, this shows up as unapproved tool usage, AI-driven changes without clear accountability, tool sprawl, and unpredictable costs. Gartner data also found 90% of CIOs cite out-of-control costs as a major barrier to AI success.

How to stay in control:

- Assign an accountable owner. Appoint an AI governance leader who works cross-functionally with cybersecurity and data stakeholders.

- Establish lighthouse principles. For example: “AI can recommend any change, but cannot execute L3/L4 policy changes without approval.” “All AI actions must be replayable and reversible.” “No production secrets may be submitted to public GenAI.”

- Create an operating model for agents. Define who authors workflows, who approves them, who owns failures, and how workflows are reviewed and deprecated.

- Build cost controls in from day one. Usage quotas, cost dashboards by team and use case, architecture choices that reduce repeated API calls, and PoCs that test cost scaling — not just technical feasibility.
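The cost-control item above can be made concrete with a per-team quota that is checked before each AI call and reported by use case. This is a hedged sketch: `UsageLedger`, the team and use-case names, and the dollar figures are hypothetical, and it assumes the cost of each call can be estimated before it is made.

```python
from collections import defaultdict


class UsageLedger:
    """Tracks AI spend per (team, use case) against a monthly team quota."""

    def __init__(self, monthly_quota_usd):
        # Example: {"netops": 10.0}. Teams without an entry have no budget.
        self.monthly_quota_usd = dict(monthly_quota_usd)
        self.spend = defaultdict(float)  # keyed by (team, use_case)

    def team_total(self, team):
        """Total recorded spend for one team across all use cases."""
        return sum(cost for (t, _), cost in self.spend.items() if t == team)

    def record(self, team, use_case, cost_usd):
        """Record the spend and return True, or return False if it would exceed quota."""
        quota = self.monthly_quota_usd.get(team, 0.0)
        if self.team_total(team) + cost_usd > quota:
            return False
        self.spend[(team, use_case)] += cost_usd
        return True

    def report(self):
        """Spend by team and use case, suitable for feeding a dashboard."""
        return {
            f"{team}/{use_case}": round(cost, 2)
            for (team, use_case), cost in sorted(self.spend.items())
        }


if __name__ == "__main__":
    ledger = UsageLedger({"netops": 10.0})
    ledger.record("netops", "incident_summary", 6.0)
    ledger.record("netops", "change_plan", 5.0)  # rejected: would exceed the quota
    print(ledger.report())
```

Rejecting a call that would exceed the quota, instead of logging it after the fact, is one possible policy. A softer variant could allow the call and raise an alert, which may suit a PoC where the goal is to observe how cost scales.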

With 48% of CIOs now solely responsible for AI efforts, networking leaders need clear answers to: What workflows will AI touch first? What data is allowed where? What’s our trust model? How do we prevent shadow AI while enabling productivity?

Closing Thought

Each fear maps to a concrete requirement you can build into your rollout. Fear #1 (Privacy): start with visibility and data boundaries. Fear #2 (Mission-critical trust): earn autonomy in stages — read, then orchestrate, then act inside guardrails. Fear #3 (Loss of control): assign an owner, define principles, and manage cost as a first-class constraint.

The leaders who win this transition won’t move fastest or block hardest. They’ll move deliberately — starting small, proving value, and expanding AI responsibility only when the system is observable and governed. In networking, “AI-ready” doesn’t mean more automation. It means automation you can explain, constrain, and trust.